Our projects

We work on Human-Computer Interaction methods that place the user at the center of the entire development process. Our team designs and develops frameworks that improve people's lives by leveraging advanced Web technologies, conversational AI and IoT.

- Conversational AI
- Co-design & Making Toolkits
- Smart Nature
- Sport Inclusiveness

CONWEB

Conversational agents (CAs) are pervading a broad range of activities, as their natural language (NL) paradigm simplifies interaction with digital systems. They offer benefits in situations where users can take advantage of voice-based interaction to accomplish their tasks. Recent work capitalises on this technology, for example to design voice-based CAs for searching the Web. However, CAs are very often seen as tools that complement the Web access experience by providing additional content, rather than granting access to the website content itself. There is still a lack of proposals for a full-fledged integration of Conversational AI within Web architectures. This project tries to fill this gap by proposing ConWeb, a framework for Conversational Web Browsing that responds to requirements we identified through an extensive human-centred process involving 26 blind and visually impaired (BVI) users across several sessions of interviews and co-design workshops. ConWeb enables users to navigate content and services accessible on the Web by “talking to websites” instead of browsing them visually: they express their goals in natural language and access the websites through a dialog mediated by a conversational agent (e.g., a voice-based browser plugin).


CODEX

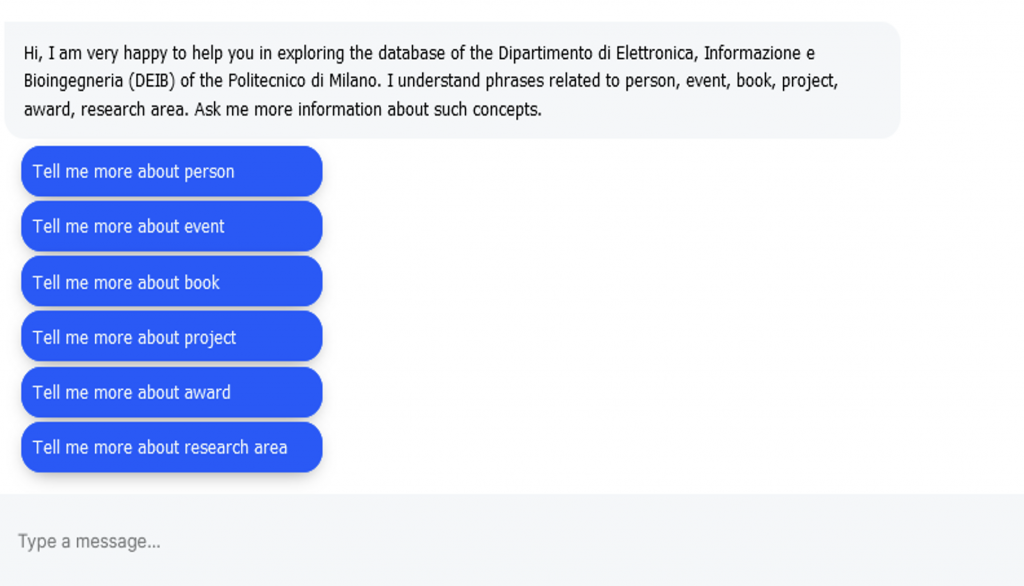

Conversational agents mainly support simple tasks for retrieving very specific data items, while it is still unclear how they can be applied to interaction with large bodies of information. CODEX presents a design approach whose key ingredient is a modeling technique that facilitates filtering relevant information entities and mapping data elements onto conversational elements. Once a dataset is properly tagged, a conversational agent is automatically generated, and users can simply ask the agent for information instead of having to create queries, getting back data intelligence and graphics. In addition, CODEX relies on a set of conversational patterns for the exploration of datasets that help the user better understand the reality represented by the data. The approach can be useful for i) non-technical users, who can explore database content without any knowledge of specific query languages, and ii) database experts, who can explore a database with performance and satisfaction comparable to the use of query languages.


MAKENODES

MakeNodes is a toolkit designed to help people with intellectual disabilities participate in workshops that create smart things and spaces in an easy, guided, and engaging way. The toolkit includes a series of input and output nodes that can be paired and placed around to make things smart and interconnected. By using MakeNodes, people can reflect on daily problems and challenges and attempt to resolve them, while also reflecting on the implications of peer-to-peer and Internet of Things (IoT) technologies. MakeNodes encourages collaboration and creativity, making it a valuable tool for anyone interested in exploring the possibilities of smart technology.


IOTOGO 4 INCLUSION

Making tools, coupled with card-based tools, are often employed to design smart things with their end users, that is, everyday physical things augmented with electronics. The potential benefits of prototyping them for people with intellectual disabilities have attracted the interest of HCI researchers. However, limited effort has been devoted to smart-thing prototyping toolkits for them. The work illustrated in this paper tries to address this gap. It presents a toolkit that lets people with intellectual disabilities rapidly prototype, together, their own ideas of smart things for their environment. By discussing the results of two workshops with the toolkit, involving eight users with intellectual disabilities, this paper analyses aspects of the toolkit that (dis)engaged them in design with prototyping, with or without assistance. Lessons of broad interest for the design of similar toolkits are drawn from the literature and study findings.


COBO

COBO is a phygital interactive toolkit for co-designing interactive outdoor experiences, intended for people with intellectual disabilities. The toolkit consists of an interactive board and a dedicated tablet application, with which the user can interact during all stages of the game and which provides information and advice. The interactive board recognizes the card combinations made by users and lets them test those combinations through a series of interactive functions, which make the effects visible on reproductions of the park elements.


EKO

Studies in recent decades have highlighted how children spend less time outdoors, while they are increasingly attracted by screens and indoor digital activities. Based on the assumption that technology and open-air activities are not necessarily mutually exclusive, this article illustrates the design of integrated physical-digital systems that motivate children to connect with nature and learn from it. A key ingredient is the provision of unobtrusive technology supporting playful, creative, and educational outdoor experiences without distracting children from their experience with nature. Based on the insights gathered through design-based research, the article also outlines design dimensions that aim to refine the theory and practice of technology for child-nature interaction.


GAIA

GAIA is a screen-less tangible device. It is a band that can be attached to outdoor elements (for example, trees or even street lamps in parks). Each band has four disks that emit light and react with sounds. When a child touches the buttons, GAIA tells stories that guide children on a treasure hunt, asking them to look for specific elements outdoors, for example trees belonging to a given species. The treasure hunt is collaborative by nature; by solving it together, children are led to explore the nature around them.

ABBOT

ABBOT is an interactive smart toy designed to encourage children around 5 to 6 years old to play outdoors and explore natural elements. In this way they can “catch textures”, become true explorers, and start their own material collection. It is a pervasive interactive game for children in the early years of primary school that aims to stimulate exploration of outdoor environments. It is a screen-free smart toy made of natural materials. ABBOT combines a smart tangible object for outdoor play with a mobile app for accessing new content related to the discovered natural elements. The tangible object helps children capture images of the elements they find interesting in the physical environment. At home, through simple interactive games on a tablet, children can continue to interact with the collected digital materials and can also access new related content.


SNaP

SNaP (Smart Nature Protagonists) is a collaborative card-based game, with ideas taken from traditional board game design, that guides children in the co-design of smart objects for nature exploration. It addresses children aged 8 and older and aims to include them in the design practice of augmenting objects in outdoor environments. Its main elements are nature cards and a game board, plus a set of game rules that inspire children in the ideation of smart objects.


VIBS

Practising sports, and swimming in particular, has several positive effects, among which improving individuals’ perception of the self and therefore their control over their own body. Nevertheless, swimming accessibility is very low for people with visual impairments. To address this issue, this paper proposes VIBS, a touch-sensitive swimming cap that aims to make visually impaired swimmers feel safe and independent. VIBS is the result of a human-centered process that, by involving 3 Paralympic swimmers, allowed us to collect insights informing its design. The paper also discusses the results of a preliminary evaluation that highlighted the potential of the designed technologies and directions for extensions.
